Journal Club of the Month: April 2019

Variety before summer!

Yes indeed: this month's program covers a wide range of topics, all equally worth discussing at your next journal clubs 😉

And when the major journals cover anesthesia and critical care, it goes straight into the AJAR Paris journal club of the month!

Cirrhosis with some pathophysiology, poisonings with a treatment guide, AF management, a discussion of the type of general anesthesia in cardiac surgery, ARDS from every angle, a discussion of coronary angiography after cardiac arrest, regional anesthesia with randomized trials, and sepsis!

Plus our favorite topics: deep learning, well-being, and a fine review on the management of brain-dead patients!

Enjoy! <3

Inhaled or intravenous general anesthesia in cardiac surgery?

Landoni, et al.

N Engl J Med 2019; 380:1214-1225

DOI: 10.1056/NEJMoa1816476

Background

Volatile (inhaled) anesthetic agents have cardioprotective effects, which might improve clinical outcomes in patients undergoing coronary-artery bypass grafting (CABG).

Methods

We conducted a pragmatic, multicenter, single-blind, controlled trial at 36 centers in 13 countries. Patients scheduled to undergo elective CABG were randomly assigned to an intraoperative anesthetic regimen that included a volatile anesthetic (desflurane, isoflurane, or sevoflurane) or to total intravenous anesthesia. The primary outcome was death from any cause at 1 year.

Results

A total of 5400 patients were randomly assigned: 2709 to the volatile anesthetics group and 2691 to the total intravenous anesthesia group. On-pump CABG was performed in 64% of patients, with a mean duration of cardiopulmonary bypass of 79 minutes. The two groups were similar with respect to demographic and clinical characteristics at baseline, the duration of cardiopulmonary bypass, and the number of grafts. At the time of the second interim analysis, the data and safety monitoring board advised that the trial should be stopped for futility. No significant difference between the groups with respect to deaths from any cause was seen at 1 year (2.8% in the volatile anesthetics group and 3.0% in the total intravenous anesthesia group; relative risk, 0.94; 95% confidence interval [CI], 0.69 to 1.29; P=0.71), with data available for 5353 patients (99.1%), or at 30 days (1.4% and 1.3%, respectively; relative risk, 1.11; 95% CI, 0.70 to 1.76), with data available for 5398 patients (99.9%). There were no significant differences between the groups in any of the secondary outcomes or in the incidence of prespecified adverse events, including myocardial infarction.

Conclusions

Among patients undergoing elective CABG, anesthesia with a volatile agent did not result in significantly fewer deaths at 1 year than total intravenous anesthesia.

Coronary angiography after cardiac arrest without ST-segment elevation?

Lemkes, et al.

https://www.nejm.org/doi/full/10.1056/NEJMoa1816897?query=TOC

N Engl J Med 2019; 380:1397-1407

DOI: 10.1056/NEJMoa1816897

Video: https://www.nejm.org/doi/full/10.1056/NEJMdo005490

Background

Ischemic heart disease is a major cause of out-of-hospital cardiac arrest. The role of immediate coronary angiography and percutaneous coronary intervention (PCI) in the treatment of patients who have been successfully resuscitated after cardiac arrest in the absence of ST-segment elevation myocardial infarction (STEMI) remains uncertain.

Methods

In this multicenter trial, we randomly assigned 552 patients who had cardiac arrest without signs of STEMI to undergo immediate coronary angiography or coronary angiography that was delayed until after neurologic recovery. All patients underwent PCI if indicated. The primary end point was survival at 90 days. Secondary end points included survival at 90 days with good cerebral performance or mild or moderate disability, myocardial injury, duration of catecholamine support, markers of shock, recurrence of ventricular tachycardia, duration of mechanical ventilation, major bleeding, occurrence of acute kidney injury, need for renal-replacement therapy, time to target temperature, and neurologic status at discharge from the intensive care unit.

Results

At 90 days, 176 of 273 patients (64.5%) in the immediate angiography group and 178 of 265 patients (67.2%) in the delayed angiography group were alive (odds ratio, 0.89; 95% confidence interval [CI], 0.62 to 1.27; P=0.51). The median time to target temperature was 5.4 hours in the immediate angiography group and 4.7 hours in the delayed angiography group (ratio of geometric means, 1.19; 95% CI, 1.04 to 1.36). No significant differences between the groups were found in the remaining secondary end points.
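As a rough check of the primary result, the odds ratio and its 95% confidence interval can be recomputed from the survival counts above. This is only a sketch: the function name `odds_ratio_wald` is ours, and the Wald interval on the log-odds scale is an assumption, since the abstract does not state how the interval was derived.

```python
import math

def odds_ratio_wald(events_a, total_a, events_b, total_b, z=1.96):
    """Odds ratio of group A vs group B with a Wald CI on the log scale."""
    non_a = total_a - events_a
    non_b = total_b - events_b
    or_ = (events_a / non_a) / (events_b / non_b)
    # Standard error of log(OR) from the four cells of the 2x2 table
    se = math.sqrt(1 / events_a + 1 / non_a + 1 / events_b + 1 / non_b)
    low = math.exp(math.log(or_) - z * se)
    high = math.exp(math.log(or_) + z * se)
    return or_, low, high

# Alive at 90 days: 176/273 (immediate) vs 178/265 (delayed), from the abstract
or_, low, high = odds_ratio_wald(176, 273, 178, 265)
print(f"OR {or_:.2f} (95% CI {low:.2f} to {high:.2f})")
```

Under this assumption the recomputed values round to the reported odds ratio of 0.89 with an interval of 0.62 to 1.27, which spans 1.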

Conclusions

Among patients who had been successfully resuscitated after out-of-hospital cardiac arrest and had no signs of STEMI, a strategy of immediate angiography was not found to be better than a strategy of delayed angiography with respect to overall survival at 90 days.

No urgency to cardiovert well-tolerated, recent-onset atrial fibrillation?

Pluymaekers, et al.

March 18, 2019

DOI: 10.1056/NEJMoa1900353

Background

Patients with recent-onset atrial fibrillation commonly undergo immediate restoration of sinus rhythm by pharmacologic or electrical cardioversion. However, whether immediate restoration of sinus rhythm is necessary is not known, since atrial fibrillation often terminates spontaneously.

Methods

In a multicenter, randomized, open-label, noninferiority trial, we randomly assigned patients with hemodynamically stable, recent-onset (<36 hours), symptomatic atrial fibrillation in the emergency department to be treated with a wait-and-see approach (delayed-cardioversion group) or early cardioversion. The wait-and-see approach involved initial treatment with rate-control medication only and delayed cardioversion if the atrial fibrillation did not resolve within 48 hours. The primary end point was the presence of sinus rhythm at 4 weeks. Noninferiority would be shown if the lower limit of the 95% confidence interval for the between-group difference in the primary end point in percentage points was more than −10.

Results

The presence of sinus rhythm at 4 weeks occurred in 193 of 212 patients (91%) in the delayed-cardioversion group and in 202 of 215 (94%) in the early-cardioversion group (between-group difference, −2.9 percentage points; 95% confidence interval [CI], −8.2 to 2.2; P=0.005 for noninferiority). In the delayed-cardioversion group, conversion to sinus rhythm within 48 hours occurred spontaneously in 150 of 218 patients (69%) and after delayed cardioversion in 61 patients (28%). In the early-cardioversion group, conversion to sinus rhythm occurred spontaneously before the initiation of cardioversion in 36 of 219 patients (16%) and after cardioversion in 171 patients (78%). Among the patients who completed remote monitoring during 4 weeks of follow-up, a recurrence of atrial fibrillation occurred in 49 of 164 patients (30%) in the delayed-cardioversion group and in 50 of 171 (29%) in the early-cardioversion group. Within 4 weeks after randomization, cardiovascular complications occurred in 10 patients and 8 patients, respectively.
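The noninferiority reasoning can be sketched in a few lines: compute the between-group difference in percentage points, then compare the lower limit of its confidence interval with the prespecified margin of -10. The function names are ours, and the lower limit (-8.2) is taken from the abstract rather than recomputed, because the interval method is not described there.

```python
def risk_difference_points(events_a, total_a, events_b, total_b):
    """Between-group difference (A minus B) in percentage points."""
    return 100 * (events_a / total_a - events_b / total_b)

def is_noninferior(ci_lower_points, margin_points=-10.0):
    """Noninferiority holds when the CI lower limit lies above the margin."""
    return ci_lower_points > margin_points

# Sinus rhythm at 4 weeks: 193/212 (delayed) vs 202/215 (early)
diff = risk_difference_points(193, 212, 202, 215)
# Lower limit of the 95% CI as reported in the abstract (assumed, not recomputed)
noninferior = is_noninferior(-8.2)
print(f"difference {diff:.1f} points, noninferior: {noninferior}")
```

With the abstract's counts the point difference is about -2.9 percentage points, and the reported lower limit of -8.2 sits above the -10 margin.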

Conclusions

In patients presenting to the emergency department with recent-onset, symptomatic atrial fibrillation, a wait-and-see approach was noninferior to early cardioversion in achieving a return to sinus rhythm at 4 weeks.

Andexanet alfa, the antidote to the anticoagulants apixaban and rivaroxaban

Connolly, et al.

https://www.nejm.org/doi/full/10.1056/NEJMoa1814051

N Engl J Med 2019; 380:1326-1335

DOI: 10.1056/NEJMoa1814051

Background

Andexanet alfa is a modified recombinant inactive form of human factor Xa developed for reversal of factor Xa inhibitors.

Methods

We evaluated 352 patients who had acute major bleeding within 18 hours after administration of a factor Xa inhibitor. The patients received a bolus of andexanet, followed by a 2-hour infusion. The coprimary outcomes were the percent change in anti–factor Xa activity after andexanet treatment and the percentage of patients with excellent or good hemostatic efficacy at 12 hours after the end of the infusion, with hemostatic efficacy adjudicated on the basis of prespecified criteria. Efficacy was assessed in the subgroup of patients with confirmed major bleeding and baseline anti–factor Xa activity of at least 75 ng per milliliter (or ≥0.25 IU per milliliter for those receiving enoxaparin).

Results

Patients had a mean age of 77 years, and most had substantial cardiovascular disease. Bleeding was predominantly intracranial (in 227 patients [64%]) or gastrointestinal (in 90 patients [26%]). In patients who had received apixaban, the median anti–factor Xa activity decreased from 149.7 ng per milliliter at baseline to 11.1 ng per milliliter after the andexanet bolus (92% reduction; 95% confidence interval [CI], 91 to 93); in patients who had received rivaroxaban, the median value decreased from 211.8 ng per milliliter to 14.2 ng per milliliter (92% reduction; 95% CI, 88 to 94). Excellent or good hemostasis occurred in 204 of 249 patients (82%) who could be evaluated. Within 30 days, death occurred in 49 patients (14%) and a thrombotic event in 34 (10%). Reduction in anti–factor Xa activity was not predictive of hemostatic efficacy overall but was modestly predictive in patients with intracranial hemorrhage.

Conclusions

In patients with acute major bleeding associated with the use of a factor Xa inhibitor, treatment with andexanet markedly reduced anti–factor Xa activity, and 82% of patients had excellent or good hemostatic efficacy at 12 hours, as adjudicated according to prespecified criteria.

Heart and lung transplantation from HCV-viremic donors: safe?

Ann E. Woolley, et al.

https://www.nejm.org/doi/full/10.1056/NEJMoa1812406

N Engl J Med 2019; 380:1606-1617

DOI: 10.1056/NEJMoa1812406

Background

Hearts and lungs from donors with hepatitis C viremia are typically not transplanted. The advent of direct-acting antiviral agents to treat hepatitis C virus (HCV) infection has raised the possibility of substantially increasing the donor organ pool by enabling the transplantation of hearts and lungs from HCV-infected donors into recipients who do not have HCV infection.

Methods

We conducted a trial involving transplantation of hearts and lungs from donors who had hepatitis C viremia, irrespective of HCV genotype, to adults without HCV infection. Sofosbuvir–velpatasvir, a pangenotypic direct-acting antiviral regimen, was preemptively administered to the organ recipients for 4 weeks, beginning within a few hours after transplantation, to block viral replication. The primary outcome was a composite of a sustained virologic response at 12 weeks after completion of antiviral therapy for HCV infection and graft survival at 6 months after transplantation.

Results

A total of 44 patients were enrolled: 36 received lung transplants and 8 received heart transplants. The median viral load in the HCV-infected donors was 890,000 IU per milliliter (interquartile range, 276,000 to 4.63 million). The HCV genotypes were genotype 1 (in 61% of the donors), genotype 2 (in 17%), genotype 3 (in 17%), and indeterminate (in 5%). A total of 42 of 44 recipients (95%) had a detectable hepatitis C viral load immediately after transplantation, with a median of 1800 IU per milliliter (interquartile range, 800 to 6180). Of the first 35 patients enrolled who had completed 6 months of follow-up, all 35 patients (100%; exact 95% confidence interval, 90 to 100) were alive and had excellent graft function and an undetectable hepatitis C viral load at 6 months after transplantation; the viral load became undetectable by approximately 2 weeks after transplantation, and it subsequently remained undetectable in all patients. No treatment-related serious adverse events were identified. More cases of acute cellular rejection for which treatment was indicated occurred in the HCV-infected lung-transplant recipients than in a cohort of patients who received lung transplants from donors who did not have HCV infection. This difference was not significant after adjustment for possible confounders.
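The "exact 95% confidence interval, 90 to 100" quoted for 35 of 35 patients is consistent with a Clopper-Pearson interval, which has a simple closed form when every patient is a success. A minimal sketch, assuming that method (the abstract only says "exact"); the function name is ours.

```python
def exact_ci_all_successes(n, alpha=0.05):
    """Clopper-Pearson two-sided CI when all n trials are successes.

    With x = n, the lower limit solves p**n = alpha / 2 and the upper limit is 1.
    """
    lower = (alpha / 2) ** (1 / n)
    return lower, 1.0

lower, upper = exact_ci_all_successes(35)
print(f"{100 * lower:.0f}% to {100 * upper:.0f}%")
```

The lower limit depends only on the sample size here, which is why a perfect result in 35 patients still leaves room for a true success rate near 90%.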

Conclusions

In patients without HCV infection who received a heart or lung transplant from donors with hepatitis C viremia, treatment with an antiviral regimen for 4 weeks, initiated within a few hours after transplantation, prevented the establishment of HCV infection.

Review of poisons and chemical agents, with antidotes

Fred M. Henretig, M.D., Mark A. Kirk, M.D., and Charles A. McKay, Jr., M.D.

https://www.nejm.org/doi/full/10.1056/NEJMra1504690

DOI: 10.1056/NEJMra1504690

Workplace wellness programs: good for health?

https://jamanetwork.com/journals/jama/fullarticle/2730614

Importance: Employers have increasingly invested in workplace wellness programs to improve employee health and decrease health care costs. However, there is little experimental evidence on the effects of these programs.

Objective: To evaluate a multicomponent workplace wellness program resembling programs offered by US employers.

Design, Setting, and Participants: This clustered randomized trial was implemented at 160 worksites from January 2015 through June 2016. Administrative claims and employment data were gathered continuously through June 30, 2016; data from surveys and biometrics were collected from July 1, 2016, through August 31, 2016.

Interventions: There were 20 randomly selected treatment worksites (4037 employees) and 140 randomly selected control worksites (28 937 employees, including 20 primary control worksites [4106 employees]). Control worksites received no wellness programming. The program comprised 8 modules focused on nutrition, physical activity, stress reduction, and related topics implemented by registered dietitians at the treatment worksites.

Main Outcomes and Measures: Four outcome domains were assessed. Self-reported health and behaviors via surveys (29 outcomes) and clinical measures of health via screenings (10 outcomes) were compared among 20 intervention and 20 primary control sites; health care spending and utilization (38 outcomes) and employment outcomes (3 outcomes) from administrative data were compared among 20 intervention and 140 control sites.

Results: Among 32 974 employees (mean [SD] age, 38.6 [15.2] years; 15 272 [45.9%] women), the mean participation rate in surveys and screenings at intervention sites was 36.2% to 44.6% (n = 4037 employees) and at primary control sites was 34.4% to 43.0% (n = 4106 employees) (mean of 1.3 program modules completed). After 18 months, the rates for 2 self-reported outcomes were higher in the intervention group than in the control group: for engaging in regular exercise (69.8% vs 61.9%; adjusted difference, 8.3 percentage points [95% CI, 3.9-12.8]; adjusted P = .03) and for actively managing weight (69.2% vs 54.7%; adjusted difference, 13.6 percentage points [95% CI, 7.1-20.2]; adjusted P = .02). The program had no significant effects on other prespecified outcomes: 27 self-reported health outcomes and behaviors (including self-reported health, sleep quality, and food choices), 10 clinical markers of health (including cholesterol, blood pressure, and body mass index), 38 medical and pharmaceutical spending and utilization measures, and 3 employment outcomes (absenteeism, job tenure, and job performance).

Conclusions and Relevance: Among employees of a large US warehouse retail company, a workplace wellness program resulted in significantly greater rates of some positive self-reported health behaviors among those exposed compared with employees who were not exposed, but there were no significant differences in clinical measures of health, health care spending and utilization, and employment outcomes after 18 months. Although limited by incomplete data on some outcomes, these findings may temper expectations about the financial return on investment that wellness programs can deliver in the short term.

Impact of the duration and type of antibiotic prophylaxis

https://jamanetwork.com/journals/jamasurgery/fullarticle/2731307

Importance: The benefits of antimicrobial prophylaxis are limited to the first 24 hours postoperatively. Little is known about the harms associated with continuing antimicrobial prophylaxis after skin closure.

Objective: To characterize the association of type and duration of prophylaxis with surgical site infection (SSI), acute kidney injury (AKI), and Clostridium difficile infection.

Design, Setting, and Participants: In this multicenter, national retrospective cohort study, all patients within the national Veterans Affairs health care system who underwent cardiac, orthopedic total joint replacement, colorectal, and vascular procedures and who received planned manual review by a trained nurse reviewer for type and duration of surgical prophylaxis and for SSI from October 1, 2008, to September 30, 2013, were included. Data were analyzed using multivariable logistic regression, with adjustments for covariates determined a priori to be associated with the outcomes of interest. Data were analyzed from December 2016 to December 2018.

Exposures: Duration of postoperative antimicrobial prophylaxis (<24 hours, 24-<48 hours, 48-<72 hours, and ≥72 hours).

Main Outcomes and Measures: Surgical site infection, AKI, and C difficile infection.

Results: Of the 79 058 included patients, 76 109 (96.3%) were men, and the mean (SD) age was 64.8 (9.4) years. Among 79 058 surgical procedures in the cohort, all had SSI and C difficile outcome data available; 71 344 (90.2%) had AKI outcome data. After stratification by type of surgery and adjustment for age, sex, race, diabetes, smoking, American Society of Anesthesiologists score greater than 2, methicillin-resistant Staphylococcus aureus colonization, mupirocin, type of prophylaxis, and facility factors, SSI was not associated with duration of prophylaxis. Adjusted odds of AKI increased with each additional day of prophylaxis (cardiac procedure: 24-<48 hours: adjusted odds ratio [aOR], 1.03; 95% CI, 0.95-1.12; 48-<72 hours: aOR, 1.22; 95% CI, 1.08-1.39; ≥72 hours: aOR, 1.82; 95% CI, 1.54-2.16; noncardiac procedure: 24-<48 hours: aOR, 1.31; 95% CI, 1.21-1.42; 48-<72 hours: aOR, 1.72; 95% CI, 1.47-2.01; ≥72 hours: aOR, 1.79; 95% CI, 1.27-2.53). The risk of postoperative C difficile infection demonstrated a similar duration-dependent association (24-<48 hours: aOR 1.08; 95% CI, 0.89-1.31; 48-<72 hours: aOR, 2.43; 95% CI, 1.80-3.27; ≥72 hours: aOR, 3.65; 95% CI, 2.40-5.53). The unadjusted numbers needed to harm for AKI after 24 to less than 48 hours, 48 to less than 72 hours, and 72 hours or more of postoperative prophylaxis were 9, 6, and 4, respectively; and 2000, 90, and 50 for C difficile infection, respectively. Vancomycin receipt was also a significant risk factor for AKI (cardiac procedure: aOR, 1.17; 95% CI, 1.10-1.25; noncardiac procedure: aOR, 1.21; 95% CI, 1.13-1.30).
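A number needed to harm (NNH) is the reciprocal of an absolute risk increase, so the reported NNH values can be converted back into approximate excess risks. This is a sketch only: the NNH figures in the abstract are rounded and unadjusted, so the implied percentages are rough, and the function name is ours.

```python
def implied_risk_increase_percent(nnh):
    """Absolute risk increase (in %) implied by a number needed to harm."""
    return 100 / nnh

durations = ["24-<48 h", "48-<72 h", ">=72 h"]
nnh_aki = [9, 6, 4]          # reported unadjusted NNH for AKI
nnh_cdiff = [2000, 90, 50]   # reported unadjusted NNH for C difficile infection

for label, aki, cdiff in zip(durations, nnh_aki, nnh_cdiff):
    print(f"{label}: AKI +{implied_risk_increase_percent(aki):.1f}%, "
          f"C difficile +{implied_risk_increase_percent(cdiff):.2f}%")
```

Read this way, an NNH of 4 for AKI at 72 hours or more corresponds to roughly one extra case for every four patients, whereas the C difficile figures imply much smaller absolute increases.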

Conclusions and Relevance: Increasing duration of antimicrobial prophylaxis was associated with higher odds of AKI and C difficile infection in a duration-dependent fashion; extended duration did not lead to additional SSI reduction. These findings highlight the notion that every day matters and suggest that stewardship efforts to limit duration of prophylaxis have the potential to reduce adverse events without increasing SSI.

Patients at risk of postoperative delirium: not a reason to turn them down for surgery?

https://jamanetwork.com/journals/jamasurgery/fullarticle/2720416

Importance: Postoperative delirium is associated with decreases in long-term cognitive function in elderly populations.

Objective: To determine whether postoperative delirium is associated with decreased long-term cognition in a younger, more heterogeneous population.

Design, Setting, and Participants: A prospective cohort study was conducted at a single academic medical center (≥800 beds) in the southeastern United States from September 5, 2017, through January 15, 2018. A total of 191 patients aged 18 years or older who were English-speaking and were anticipated to require at least 1 night of hospital admission after a scheduled major nonemergent surgery were included. Prisoners, individuals without baseline cognitive assessments, and those who could not provide informed consent were excluded. Ninety-day follow-up assessments were performed on 135 patients (70.7%).

Exposures: The primary exposure was postoperative delirium defined as any instance of delirium occurring 24 to 72 hours after an operation. Delirium was diagnosed by the research team using the Confusion Assessment Method (CAM).

Main Outcomes and Measures: The primary outcome was change in cognition at 90 days after surgery compared with baseline, preoperative cognition. Cognition was measured using a telephone version of the Montreal Cognitive Assessment (T-MoCA) with cognitive impairment defined as a score less than 18 on a scale of 0 to 22.

Results: Of the 191 patients included in the study, 110 (57.6%) were women; the mean (SD) age was 56.8 (16.7) years. For the primary outcome of interest, patients with and without delirium had a small increase in T-MoCA scores at 90 days compared with baseline on unadjusted analysis (with delirium, 0.69; 95% CI, −0.34 to 1.73 vs without delirium, 0.67; 95% CI, 0.17-1.16). The initial multivariate linear regression model included age, preoperative American Society of Anesthesiologists Physical Status Classification System score, preoperative cognitive impairment, and duration of anesthesia. Preoperative cognitive impairment proved to be the only notable confounder: when adjusted for preoperative cognitive impairment, patients with delirium had a 0.70-point greater decrease in 90-day T-MoCA scores than those without delirium compared with their respective baseline scores (with delirium, 0.16; 95% CI, −0.63 to 0.94 vs without delirium, 0.86; 95% CI, 0.40-1.33).

Conclusions and Relevance: Although a statistically significant association between 90-day cognition and postoperative delirium was not noted, patients with preoperative cognitive impairment appeared to have improvements in cognition 90 days after surgery; however, this finding was attenuated if they became delirious. Preoperative cognitive impairment alone should not preclude patients from undergoing indicated surgical procedures.

AF ablation versus medical therapy: better quality of life but no significant difference on hard end points (mortality, bleeding…)

CABANA trial:

- https://jamanetwork.com/journals/jama/fullarticle/2728676

- https://jamanetwork.com/journals/jama/fullarticle/2728675

Impact of anesthesia on oncologic outcomes: where do we stand?

https://jamanetwork.com/journals/jamasurgery/fullarticle/2720418

Deep learning in medicine: where do we stand?

https://jamanetwork.com/journals/jamainternalmedicine/fullarticle/2718342

US recommendations on TAVI for severe aortic stenosis

https://jamanetwork.com/journals/jama/fullarticle/2729546

Identifying the subgroup of rapidly improving ARDS

https://journal.chestnet.org/article/S0012-3692(18)32582-0/fulltext

Edward J. Schenck, et al., Chest, 2019

DOI: https://doi.org/10.1016/j.chest.2018.09.031

Background

Observational studies suggest that some patients meeting criteria for ARDS no longer fulfill the oxygenation criterion early in the course of their illness. This subphenotype of rapidly improving ARDS has not been well characterized. We attempted to assess the prevalence, characteristics, and outcomes of rapidly improving ARDS and to identify which variables are useful to predict it.

Methods

A secondary analysis was performed of patient level data from six ARDS Network randomized controlled trials. We defined rapidly improving ARDS, contrasted with ARDS > 1 day, as extubation or a Pao2 to Fio2 ratio (Pao2:Fio2) > 300 on the first study day following enrollment.
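The definition above reduces to a simple rule, sketched here for illustration. The function name, argument names, and the handling of a missing ratio (treated as not meeting the oxygenation criterion) are our assumptions, not details from the study, and the example patients are hypothetical.

```python
def is_rapidly_improving_ards(extubated_day1, pf_ratio_day1=None):
    """Apply the abstract's definition on the first study day after enrollment.

    pf_ratio_day1 is the Pao2:Fio2 ratio; None means it was not measured
    (assumed here not to satisfy the > 300 criterion).
    """
    if extubated_day1:
        return True
    return pf_ratio_day1 is not None and pf_ratio_day1 > 300

# Hypothetical patients, for illustration only
print(is_rapidly_improving_ards(True))        # extubated on day 1
print(is_rapidly_improving_ards(False, 320))  # still ventilated, ratio > 300
print(is_rapidly_improving_ards(False, 180))  # remains ARDS > 1 day
```

Either criterion alone is enough, so a patient extubated on the first study day counts as rapidly improving regardless of the measured ratio.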

Results

The prevalence of rapidly improving ARDS was 10.5% (458 of 4,361 patients) and increased over time. Of the 1,909 patients enrolled in the three most recently published trials, 197 (10.3%) were extubated on the first study day, and 265 (13.9%) in total had rapidly improving ARDS. Patients with rapidly improving ARDS had lower baseline severity of illness and lower 60-day mortality (10.2% vs 26.3%; P < .0001) than ARDS > 1 day. Pao2:Fio2 at screening, change in Pao2:Fio2 from screening to enrollment, use of vasopressor agents, Fio2 at enrollment, and serum bilirubin levels were useful predictive variables.

Conclusions

Rapidly improving ARDS, mostly defined by early extubation, is an increasingly prevalent and distinct subphenotype, associated with better outcomes than ARDS > 1 day. Enrollment of patients with rapidly improving ARDS may negatively affect the prognostic enrichment and contribute to the failure of therapeutic trials.

Prostacyclin for persistent refractory ARDS?

https://journal.chestnet.org/article/S0012-3692(18)32784-3/fulltext

Review of the right heart in ARDS

https://journal.chestnet.org/article/S0012-3692(17)30263-5/fulltext

Beta-blockers to prevent decompensation of cirrhosis?

Villanueva et al., Lancet, 2019

https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(18)31875-0/fulltext

DOI: https://doi.org/10.1016/S0140-6736(18)31875-0

Background

Methods

Findings

Interpretation

Physiologic effects of albumin in decompensated cirrhosis?

https://www.gastrojournal.org/article/S0016-5085(19)33576-0/pdf

Incidence of acute kidney injury with a short empiric course of piperacillin-tazobactam plus vancomycin

https://academic.oup.com/cid/article/68/9/1456/5079140

Nephrotoxins contribute to 20%–40% of acute kidney injury (AKI) cases in the intensive care unit (ICU). The combination of piperacillin-tazobactam (PTZ) and vancomycin (VAN) has been identified as nephrotoxic, but existing studies focus on extended durations of therapy rather than the brief empiric courses often used in the ICU. The current study was performed to compare the risk of AKI with a short course of PTZ/VAN with the risk associated with other antipseudomonal β-lactam/VAN combinations.

The study included a retrospective cohort of 3299 ICU patients who received ≥24 but ≤72 hours of an antipseudomonal β-lactam/VAN combination: PTZ/VAN, cefepime (CEF)/VAN, or meropenem (MER)/VAN. The risk of developing stage 2 or 3 AKI was compared between antibiotic groups with multivariable logistic regression adjusted for relevant confounders. We also compared the risk of persistent kidney dysfunction, dialysis dependence, or death at 60 days between groups.

The overall incidence of stage 2 or 3 AKI was 9%. Brief exposure to PTZ/VAN did not confer a greater risk of stage 2 or 3 AKI after adjustment for relevant confounders (adjusted odds ratio [95% confidence interval] for PTZ/VAN vs CEF/VAN, 1.11 [.85–1.45]; PTZ/VAN vs MER/VAN, 1.04 [.71–1.42]). No significant differences were noted between groups at 60-day follow-up in the outcomes of persistent kidney dysfunction (P = .08), new dialysis dependence (P = .15), or death (P = .09).

Short courses of PTZ/VAN were not associated with a greater risk of short- or 60-day adverse renal outcomes than other empiric broad-spectrum combinations.

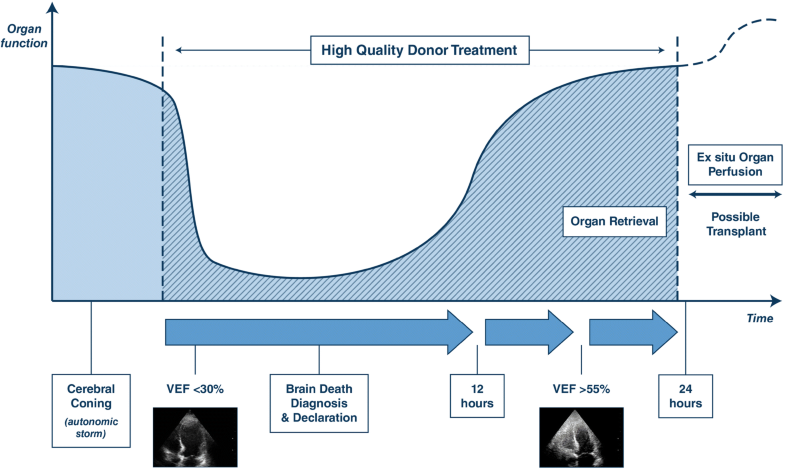

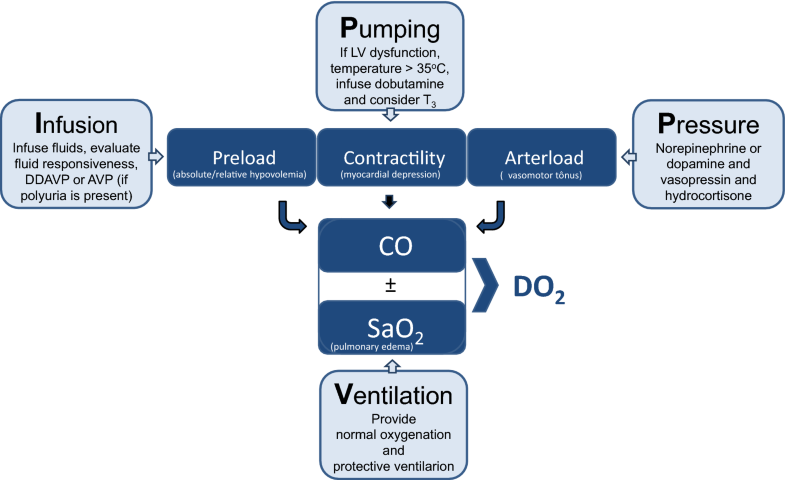

Review of the management of brain-dead patients: when to procure organs?

https://link.springer.com/article/10.1007%2Fs00134-019-05574-5

-> Optimization using the VIPP approach:

Diagnosing brain death on ECMO?

Thomas Bein, Thomas Müller, Giuseppe Citerio

Identifying 5 cardiovascular profiles in septic shock?

Géri, et al., ICM, 2019

Purpose

Mechanisms of circulatory failure are complex and frequently intricate in septic shock. Better characterization could help to optimize hemodynamic support.

Methods

Two published prospective databases from 12 different ICUs including echocardiographic monitoring performed by a transesophageal route at the initial phase of septic shock were merged for post hoc analysis. Hierarchical clustering in a principal components approach was used to define cardiovascular phenotypes using clinical and echocardiographic parameters. Missing data were imputed.

Findings

A total of 360 patients (median age 64 [55; 74]) were included in the analysis. Five different clusters were defined: patients well resuscitated (cluster 1, n = 61, 16.9%) without left ventricular (LV) systolic dysfunction, right ventricular (RV) failure or fluid responsiveness, patients with LV systolic dysfunction (cluster 2, n = 64, 17.7%), patients with hyperkinetic profile (cluster 3, n = 84, 23.3%), patients with RV failure (cluster 4, n = 81, 22.5%) and patients with persistent hypovolemia (cluster 5, n = 70, 19.4%). Day 7 mortality was 9.8%, 32.8%, 8.3%, 27.2%, and 23.2%, while ICU mortality was 21.3%, 50.0%, 23.8%, 42.0%, and 38.6% in clusters 1, 2, 3, 4, and 5, respectively (p < 0.001 for both).

Conclusion

Our clustering approach on a large population of septic shock patients, based on clinical and echocardiographic parameters, was able to characterize five different cardiovascular phenotypes. How this could help physicians to optimize hemodynamic support should be evaluated in the future.

Meta-analysis of acute kidney injury in trauma patients in the ICU

Søvik et al., ICM, 2019

State of the art in managing infections in solid organ transplant recipients

JFT et al., ICM, 2019

Meta-analysis: Optiflow vs conventional oxygen therapy in acute hypoxemic respiratory failure

Rochwerg et al., ICM, 2019

Background

This systematic review and meta-analysis summarizes the safety and efficacy of high flow nasal cannula (HFNC) in patients with acute hypoxemic respiratory failure.

Methods

We performed a comprehensive search of MEDLINE, EMBASE, and Web of Science. We identified randomized controlled trials that compared HFNC to conventional oxygen therapy. We pooled data and report summary estimates of effect using relative risk for dichotomous outcomes and mean difference or standardized mean difference for continuous outcomes, with 95% confidence intervals. We assessed risk of bias of included studies using the Cochrane tool and certainty in pooled effect estimates using GRADE methods.

Results

We included 9 RCTs (n = 2093 patients). We found no difference in mortality in patients treated with HFNC (relative risk [RR] 0.94, 95% confidence interval [CI] 0.67–1.31, moderate certainty) compared to conventional oxygen therapy. We found a decreased risk of requiring intubation (RR 0.85, 95% CI 0.74–0.99) or escalation of oxygen therapy (defined as crossover to HFNC in the control group, or initiation of non-invasive ventilation or invasive mechanical ventilation in either group) favouring HFNC-treated patients (RR 0.71, 95% CI 0.51–0.98), although certainty in both outcomes was low due to imprecision and issues related to risk of bias. HFNC had no effect on intensive care unit length of stay (mean difference [MD] 1.38 days more, 95% CI 0.90 days fewer to 3.66 days more, low certainty), hospital length of stay (MD 0.85 days fewer, 95% CI 2.07 days fewer to 0.37 days more, moderate certainty), patient reported comfort (SMD 0.12 lower, 95% CI 0.61 lower to 0.37 higher, very low certainty) or patient reported dyspnea (standardized mean difference [SMD] 0.16 lower, 95% CI 1.10 lower to 1.42 higher, low certainty). Complications of treatment were variably reported amongst included studies, but little harm was associated with HFNC use.
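One quick way to read these pooled estimates is to check whether each 95% confidence interval contains 1, the value of a relative risk that means no effect. The sketch below does only that, using the figures from the abstract; the function name is ours, and the check says nothing about certainty of evidence, which the authors graded separately.

```python
def excludes_no_effect(ci_low, ci_high, null_value=1.0):
    """True when a confidence interval for a ratio does not contain the null value."""
    return ci_high < null_value or ci_low > null_value

# Pooled relative risks and 95% CIs as reported in the abstract
pooled = {
    "mortality": (0.94, 0.67, 1.31),
    "intubation": (0.85, 0.74, 0.99),
    "escalation of oxygen therapy": (0.71, 0.51, 0.98),
}

for outcome, (rr, low, high) in pooled.items():
    verdict = "excludes 1" if excludes_no_effect(low, high) else "includes 1"
    print(f"{outcome}: RR {rr} ({low} to {high}) {verdict}")
```

The intubation interval only just excludes 1 (upper limit 0.99), which fits the authors' low certainty rating for that outcome.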

Conclusion

In patients with acute hypoxemic respiratory failure, HFNC may decrease the need for tracheal intubation without impacting mortality.

Ketoacidosis: avoid rapid-acting insulin boluses?

Objectives: Insulin infusion therapy is commonly used in the hospital setting to manage diabetic ketoacidosis and hyperosmolar hyperglycemic state. Clinical evidence suggests both hypoglycemia and glycemic variability negatively impact patient outcomes. The hypothesis of this study was that moderate-intensity insulin therapy decreases hospital length of stay and prevalence of hypoglycemia in patients with diabetic ketoacidosis and hyperosmolar hyperglycemic state.

Design: Pre-post study.

Setting: Large academic medical center in the United States.

Patients: Two-hundred one consecutive, nonpregnant, adult patients admitted for diabetic ketoacidosis and hyperosmolar hyperglycemic state between October 2010 and December 2014.

Interventions: High-intensity insulin therapy versus moderate-intensity insulin therapy. High-intensity insulin therapy was designed to rapidly normalize blood glucose levels with bolus doses of insulin and rapid insulin titration. Moderate-intensity insulin therapy was designed to mitigate glycemic variability and hypoglycemia through avoidance of bolus dosing, a liberalized blood glucose target, and gradual insulin titration.

Measurements and Main Results: Hospital and ICU length of stay were reduced by 23.6% and 38%, respectively. The relative risks of remaining in the hospital at day 7 (0.51; p = 0.022) and day 14 (0.28; p = 0.044) were significantly reduced by the moderate-intensity insulin therapy strategy. The relative risk of remaining in the ICU at 48 hours was significantly lower in the moderate-intensity insulin therapy cohort (0.34; p = 0.0048). The prevalence (35% vs 1%; p = 0.0003) and relative risk (0.028; p = 0.0004) of hypoglycemia were significantly lower in the moderate-intensity insulin therapy cohort. Glycemic variability decreased by 28.6% (p < 0.0001). There was no difference in the time to anion gap closure (p = 0.123).

Conclusions: Moderate-intensity insulin therapy for diabetic ketoacidosis and hyperosmolar hyperglycemic state resulted in improvements in hospital and ICU length of stay, which appeared to be associated with decreased glycemic variability.

Hypothermia in sepsis = poor prognosis?

Objectives: To investigate the impact of body temperature on disease severity, implementation of sepsis bundles, and outcomes in severe sepsis patients.

Design: Retrospective sub-analysis.

Setting: Fifty-nine ICUs in Japan, from January 2016 to March 2017.

Patients: Adult patients with severe sepsis based on Sepsis-2 were enrolled and divided into three categories (body temperature < 36°C, 36–38°C, > 38°C), using the core body temperature at ICU admission.

Interventions: None.

Measurements and Main Results: Compliance with the bundles proposed in the Surviving Sepsis Campaign Guidelines 2012, in-hospital mortality, disposition after discharge, and the number of ICU and ventilator-free days were evaluated. Of 1,143 enrolled patients, 127, 565, and 451 were categorized as having body temperature less than 36°C, 36–38°C, and greater than 38°C, respectively. Hypothermia—body temperature less than 36°C—was observed in 11.1% of patients. Patients with hypothermia were significantly older than those with a body temperature of 36–38°C or greater than 38°C and had a lower body mass index and higher prevalence of septic shock than those with body temperature greater than 38°C. Acute Physiology and Chronic Health Evaluation II and Sequential Organ Failure Assessment scores on the day of enrollment were also significantly higher in hypothermia patients. Implementation rates of the entire 3-hour bundle and administration of broad-spectrum antibiotics significantly differed across categories; implementation rates were significantly lower in patients with body temperature less than 36°C than in those with body temperature greater than 38°C. Implementation rate of the entire 3-hour resuscitation bundle + vasopressor use + remeasured lactate significantly differed across categories, as did the in-hospital and 28-day mortality. The odds ratio for in-hospital mortality relative to the reference range of body temperature greater than 38°C was 1.760 (95% CI, 1.134–2.732) in the group with hypothermia. The proportions of ICU-free and ventilator-free days also significantly differed between categories and were significantly smaller in patients with hypothermia.

Conclusions: Hypothermia was associated with a significantly higher disease severity, mortality risk, and lower implementation of sepsis bundles.

Medication errors in nearly 50% of patients after transfer from the ICU to another ward?

Objectives: To determine the point prevalence of medication errors at the time of transition of care from an ICU to non-ICU location and assess error types and risk factors for medication errors during transition of care.

Design: This was a multicenter, retrospective, 7-day point prevalence study.

Setting: Fifty-eight ICUs within 34 institutions in the United States and two in the Netherlands.

Patients: Nine-hundred eighty-five patients transferred from an ICU to non-ICU location.

Interventions: None.

Measurements and Main Results: Of 985 patients transferred, 450 (45.7%) had a medication error occur during transition of care. Among patients with a medication error, an average of 1.88 errors per patient (SD, 1.30; range, 1–9) occurred. The most common types of errors were continuation of medication with ICU-only indication (28.4%), untreated condition (19.4%), and pharmacotherapy without indication (11.9%). Seventy-five percent of errors reached the patient but did not cause harm. The occurrence of errors varied by type and size of institution and ICU. Renal replacement therapy during ICU stay and number of medications ordered following transfer were identified as factors associated with occurrence of error (odds ratio, 2.93; 95% CI, 1.42–6.05; odds ratio 1.08, 95% CI, 1.02–1.14, respectively). Orders for anti-infective (odds ratio, 1.66; 95% CI, 1.19–2.32), hematologic agents (1.75; 95% CI, 1.17–2.62), and IV fluids, electrolytes, or diuretics (odds ratio, 1.73; 95% CI, 1.21–2.48) at transition of care were associated with an increased odds of error. Factors associated with decreased odds of error included daily patient care rounds in the ICU (odds ratio, 0.15; 95% CI, 0.07–0.34) and orders discontinued and rewritten at the time of transfer from the ICU (odds ratio, 0.36; 95% CI, 0.17–0.73).
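To give a sense of the precision behind the headline figure, here is a minimal sketch that recomputes the point prevalence from the counts quoted above (450 of 985) and adds a Wilson 95% confidence interval. The interval is an illustration only: the abstract does not report one, and the choice of the Wilson method and the function name `wilson_interval` are assumptions made for this example.

```python
import math


def wilson_interval(events: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    p = events / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half_width, centre + half_width


# Counts taken from the abstract: 450 of 985 transferred patients had an error.
prevalence = 450 / 985
low, high = wilson_interval(450, 985)
print(f"Prevalence {prevalence:.1%}, illustrative 95% CI {low:.1%} to {high:.1%}")
```

With close to a thousand patients the interval is only a few percentage points wide on either side, which is consistent with the "nearly half" wording of the conclusion.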

Conclusions: Nearly half of patients experienced medication errors at the time of transition of care from an ICU to non-ICU location. Most errors reached the patient but did not cause harm. This study identified risk factors upon which risk mitigation strategies should be focused.

Risk factors for intra-abdominal hypertension

Objectives: To identify the prevalence, risk factors, and outcomes of intra-abdominal hypertension in a mixed multicenter ICU population.

Design: Prospective observational study.

Setting: Fifteen ICUs worldwide.

Patients: Consecutive adult ICU patients with a bladder catheter.

Interventions: None.

Measurements and Main Results: Four hundred ninety-one patients were included. Intra-abdominal pressure was measured a minimum of every 8 hours. Subjects with a mean intra-abdominal pressure equal to or greater than 12 mm Hg were defined as having intra-abdominal hypertension. Intra-abdominal hypertension was present in 34.0% of the patients on the day of ICU admission (159/467) and in 48.9% of the patients (240/491) during the observation period. The severity of intra-abdominal hypertension was as follows: grade I, 47.5%; grade II, 36.6%; grade III, 11.7%; and grade IV, 4.2%. The severity of intra-abdominal hypertension during the first 2 weeks of the ICU stay was identified as an independent predictor of 28- and 90-day mortality, whereas the presence of intra-abdominal hypertension on the day of ICU admission did not predict mortality.

The following variables were independently associated with the development of intra-abdominal hypertension at any time during the observation period:

– body mass index,

– Acute Physiology and Chronic Health Evaluation II score greater than or equal to 18,

– presence of abdominal distension,

– absence of bowel sounds, and

– positive end-expiratory pressure greater than or equal to 7 cm H2O.

In subjects without intra-abdominal hypertension on day 1, body mass index combined with daily positive fluid balance and positive end-expiratory pressure greater than or equal to 7 cm H2O (as documented on the day before intra-abdominal hypertension occurred) were associated with the development of intra-abdominal hypertension during the first week in the ICU.
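The abstract reports grades I to IV without giving their cut-offs. As a minimal sketch, the function below applies the 12 mm Hg threshold stated above together with the grade boundaries from the WSACS consensus definitions (grade I 12–15, grade II 16–20, grade III 21–25, grade IV above 25 mm Hg). Those boundaries are an assumption here, since the text does not state which grading the authors used, and the handling of fractional values between integer boundaries is a choice made for this example.

```python
def iah_grade(mean_iap_mmhg: float) -> int:
    """Return 0 for no intra-abdominal hypertension, or the grade 1 to 4.

    Thresholds follow the WSACS consensus grading (assumed, not stated in
    the abstract). A fractional value such as 15.5 mm Hg falls into the
    higher grade, which is a convention chosen for this sketch.
    """
    if mean_iap_mmhg < 12:
        return 0
    if mean_iap_mmhg <= 15:
        return 1
    if mean_iap_mmhg <= 20:
        return 2
    if mean_iap_mmhg <= 25:
        return 3
    return 4


# Grade distribution reported in the abstract, in percent of patients with IAH.
reported_distribution = {1: 47.5, 2: 36.6, 3: 11.7, 4: 4.2}

for pressure in (10, 14, 18, 23, 30):
    print(pressure, "mm Hg -> grade", iah_grade(pressure))
```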

Conclusions: In our mixed ICU patient cohort, intra-abdominal hypertension occurred in almost half of all subjects and was twice as prevalent in mechanically ventilated patients as in spontaneously breathing patients. Presence and severity of intra-abdominal hypertension during the observation period significantly and independently increased 28- and 90-day mortality. Five admission day variables were independently associated with the presence or development of intra-abdominal hypertension. Positive fluid balance was associated with the development of intra-abdominal hypertension after day 1.

A comeback for Early Goal-Directed Therapy in traumatic brain injury?

Objectives: To estimate the impact of goal-directed therapy on outcome after traumatic brain injury, our team applied goal-directed therapy to standardize care in patients with moderate to severe traumatic brain injury, who were enrolled in a large multicenter clinical trial.

Design: Planned secondary analysis of data from Progesterone for the Treatment of Traumatic Brain Injury III, a large, prospective, multicenter clinical trial.

Setting: Forty-two trauma centers within the Neurologic Emergencies Treatment Trials network.

Patients: Eight-hundred eighty-two patients were enrolled within 4 hours of injury after nonpenetrating traumatic brain injury characterized by Glasgow Coma Scale score of 4–12.

Measurements and Main Results: Physiologic goals were defined a priori in order to standardize care across 42 sites participating in Progesterone for the Treatment of Traumatic Brain Injury III. Physiologic data collection occurred hourly; laboratory data were collected according to local ICU protocols and at a minimum of once per day. Physiologic transgressions were predefined as substantial deviations from the normal range of goal-directed therapy. Each hour where goal-directed therapy was not achieved was classified as a “transgression.” Data were adjudicated electronically and via expert review. Six-month outcomes included mortality and the stratified dichotomy of the Glasgow Outcome Scale-Extended. For each variable, the association between outcome and either: 1) the occurrence of a transgression or 2) the proportion of time spent in transgression was estimated via logistic regression model.

Results: For the 882 patients enrolled in Progesterone for the Treatment of Traumatic Brain Injury III, mortality was 12.5%. Prolonged time spent in transgression was associated with increased mortality in the full cohort for hemoglobin less than 8 gm/dL (p = 0.0006), international normalized ratio greater than 1.4 (p < 0.0001), glucose greater than 180 mg/dL (p = 0.0003), and systolic blood pressure less than 90 mm Hg (p < 0.0001). In the patient subgroup with intracranial pressure monitoring, prolonged time spent in transgression was associated with increased mortality for intracranial pressure greater than or equal to 20 mm Hg (p < 0.0001), glucose greater than 180 mg/dL (p = 0.0293), hemoglobin less than 8 gm/dL (p = 0.0220), or systolic blood pressure less than 90 mm Hg (p = 0.0114). Covariates inversely related to mortality included: a single occurrence of mean arterial pressure less than 65 mm Hg (p = 0.0051) or systolic blood pressure greater than 180 mm Hg (p = 0.0002).
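The two exposure measures used in the analysis (any occurrence of a transgression, and the proportion of time spent in transgression) can be sketched as follows. The thresholds are those quoted in the results above. The variable names, the hourly readings and the decision to ignore missing hours are hypothetical, and the trial's actual adjudication rules are not described in the abstract.

```python
from typing import Callable, Optional, Sequence

# Thresholds quoted in the abstract; dictionary keys are illustrative names.
TRANSGRESSION_RULES: dict[str, Callable[[float], bool]] = {
    "systolic_bp_mmhg": lambda v: v < 90,
    "glucose_mg_dl": lambda v: v > 180,
    "hemoglobin_g_dl": lambda v: v < 8,
    "inr": lambda v: v > 1.4,
    "icp_mmhg": lambda v: v >= 20,
}


def transgression_summary(
    variable: str, hourly_values: Sequence[Optional[float]]
) -> tuple[bool, float]:
    """Return (any transgression, proportion of observed hours in transgression).

    Hours with no reading (None) are skipped, which is an assumption of this
    sketch rather than a rule taken from the trial.
    """
    rule = TRANSGRESSION_RULES[variable]
    observed = [v for v in hourly_values if v is not None]
    if not observed:
        return False, 0.0
    hours_out = sum(1 for v in observed if rule(v))
    return hours_out > 0, hours_out / len(observed)


# Hypothetical hourly systolic blood pressures for one patient.
occurred, proportion = transgression_summary(
    "systolic_bp_mmhg", [110, 85, 88, None, 120]
)
print(occurred, proportion)
```

In the study, each of these two quantities was then entered into a logistic regression model against the 6-month outcome, a step that is not reproduced here.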

Conclusions: The Progesterone for the Treatment of Traumatic Brain Injury III clinical trial rigorously monitored compliance with goal-directed therapy after traumatic brain injury. Multiple significant associations between physiologic transgressions, morbidity, and mortality were observed. These data suggest that effective goal-directed therapy in traumatic brain injury may provide an opportunity to improve patient outcomes.

Value of continuous EEG in traumatic brain injury?

Objectives: After traumatic brain injury, continuous electroencephalography is widely used to detect electrographic seizures. With the development of standardized continuous electroencephalography terminology, we aimed to describe the prevalence and burden of ictal-interictal patterns, including electrographic seizures after moderate-to-severe traumatic brain injury and to correlate continuous electroencephalography features with functional outcome.

Design: Post hoc analysis of the prospective, randomized controlled phase 2 multicenter INTREPID2566 study (ClinicalTrials.gov: NCT00805818). Continuous electroencephalography was initiated upon admission to the ICU. The primary outcome was the 3-month Glasgow Outcome Scale-Extended. Consensus electroencephalography reviews were performed by raters certified in standardized continuous electroencephalography terminology blinded to clinical data. Rhythmic, periodic, or ictal patterns were referred to as “ictal-interictal continuum”; severe ictal-interictal continuum was defined as greater than or equal to 1.5 Hz lateralized rhythmic delta activity or generalized periodic discharges and any lateralized periodic discharges or electrographic seizures.

Setting: Twenty U.S. level I trauma centers.

Patients: Patients with nonpenetrating traumatic brain injury and postresuscitation Glasgow Coma Scale score of 4–12 were included.

Interventions: None.

Measurements and Main Results: Among 152 patients with continuous electroencephalography (age 34 ± 14 yr; 88% male), 22 (14%) had severe ictal-interictal continuum including electrographic seizures in four (2.6%). Severe ictal-interictal continuum burden correlated with initial prognostic scores, including the International Mission for Prognosis and Analysis of Clinical Trials in Traumatic Brain Injury (r = 0.51; p = 0.01) and Injury Severity Score (r = 0.49; p = 0.01), but not with functional outcome. After controlling clinical covariates, unfavorable outcome was independently associated with absence of posterior dominant rhythm (common odds ratio, 3.38; 95% CI, 1.30–9.09), absence of N2 sleep transients (3.69; 1.69–8.20), predominant delta activity (2.82; 1.32–6.10), and discontinuous background (5.33; 2.28–12.96) within the first 72 hours of monitoring.

Conclusions: Severe ictal-interictal continuum patterns, including electrographic seizures, were associated with clinical markers of injury severity but not functional outcome in this prospective cohort of patients with moderate-to-severe traumatic brain injury. Importantly, continuous electroencephalography background features were independently associated with functional outcome and improved the area under the curve of existing, validated predictive models.

Preload dependence associated with impaired microcirculation?

Bouattour et al., Anesthesiology, April 2019, Vol. 130, 541-549.

doi:10.1097/ALN.0000000000002631

http://anesthesiology.pubs.asahq.org/article.aspx?articleid=2724943

Background: Dynamic indices, such as pulse pressure variation, detect preload dependence and are used to predict fluid responsiveness. The behavior of sublingual microcirculation during preload dependence is unknown during major abdominal surgery. The purpose of this study was to test the hypothesis that during abdominal surgery, microvascular perfusion is impaired during preload dependence and recovers after fluid administration.

Methods: This prospective observational study included patients having major abdominal surgery. Pulse pressure variation was used to identify preload dependence. A fluid challenge was performed when pulse pressure variation was greater than 13%. Macrocirculation variables (mean arterial pressure, heart rate, stroke volume index, and pulse pressure variation) and sublingual microcirculation variables (perfused vessel density, microvascular flow index, proportion of perfused vessels, and flow heterogeneity index) were recorded every 10 min.
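For readers less familiar with the index, pulse pressure variation is usually computed over a respiratory cycle as the difference between the maximal and minimal pulse pressure divided by their mean. The sketch below uses that standard formula and the 13% trigger stated above. The abstract does not say how the monitor derived the value, so the formula and the function names are assumptions for illustration.

```python
def pulse_pressure_variation(pp_max_mmhg: float, pp_min_mmhg: float) -> float:
    """PPV in percent: (PPmax - PPmin) divided by the mean of the two, times 100."""
    mean_pp = (pp_max_mmhg + pp_min_mmhg) / 2
    return 100 * (pp_max_mmhg - pp_min_mmhg) / mean_pp


def fluid_challenge_indicated(ppv_percent: float, threshold: float = 13.0) -> bool:
    """Apply the study's trigger: a fluid challenge when PPV exceeds 13%."""
    return ppv_percent > threshold


# Hypothetical pulse pressures over one respiratory cycle.
ppv = pulse_pressure_variation(pp_max_mmhg=50, pp_min_mmhg=42)
print(f"PPV = {ppv:.1f}% -> fluid challenge: {fluid_challenge_indicated(ppv)}")
```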

Results: In 17 patients, who contributed 32 preload dependence episodes, the occurrence of preload dependence during major abdominal surgery was associated with a decrease in mean arterial pressure (72 ± 9 vs. 83 ± 15 mmHg [mean ± SD]; P = 0.016) and stroke volume index (36 ± 8 vs. 43 ± 8 ml/m2; P < 0.001) with a concomitant decrease in microvascular flow index (median [interquartile range], 2.33 [1.81, 2.75] vs. 2.84 [2.56, 2.88]; P = 0.009) and perfused vessel density (14.9 [12.0, 16.4] vs. 16.1 mm/mm2 [14.7, 21.4], P = 0.009), while heterogeneity index was increased from 0.2 (0.2, 0.4) to 0.5 (0.4, 0.7; P = 0.001). After fluid challenge, all microvascular parameters and the stroke volume index improved, while mean arterial pressure and heart rate remained unchanged.

Conclusions: Preload dependence was associated with reduced sublingual microcirculation during major abdominal surgery. Fluid administration successfully restored microvascular perfusion.

High intra-operative FiO2: no increase in respiratory complications?

BACKGROUND The WHO recommends routine intra-operative and early postoperative use of high inspired oxygen concentrations (hyperoxia). However, a high intra-operative inspired oxygen fraction (FiO2) might result in an increased risk of postoperative respiratory complications.

AIM To test the hypothesis that intra-operative FiO2 of 80% compared with 30% inspired oxygen decreases the postoperative ratio of arterial saturation to fraction of inspired oxygen (SpO2/FiO2). Secondarily, to evaluate whether an intra-operative inspired FiO2 of 80% increases the incidence of pulmonary complications.

DESIGN Posthoc subanalysis of a large alternating cohort trial.

SETTING Cleveland Clinic, Cleveland, United States, from 2013 to 2016.

PATIENTS Adults having colorectal surgery. Cases lasting less than 2 h, re-operations on the same hospitalisation, and cases with missing intra-operative or postoperative data were excluded.

INTERVENTION Maintaining intra-operative FiO2 at 30 or 80% and alternating this management every 2 weeks for a study period of 39 months.

MAIN OUTCOME Minimal SpO2/FiO2 ratio value in the postanaesthesia care unit. Secondary outcome was a composite of postoperative pulmonary complications throughout hospitalisation.
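The primary outcome is simple arithmetic, and a short sketch makes the definition concrete. It assumes SpO2 is expressed in percent and FiO2 as a fraction between 0.21 and 1.0, a convention the abstract does not specify. The readings are hypothetical.

```python
def sf_ratio(spo2_percent: float, fio2_fraction: float) -> float:
    """SpO2/FiO2 ratio, with SpO2 in percent and FiO2 as a fraction (assumed units)."""
    if not 0.21 <= fio2_fraction <= 1.0:
        raise ValueError("FiO2 must be a fraction between 0.21 and 1.0")
    return spo2_percent / fio2_fraction


def minimal_sf_ratio(readings: list[tuple[float, float]]) -> float:
    """Lowest SpO2/FiO2 ratio across a series of (SpO2, FiO2) readings."""
    return min(sf_ratio(spo2, fio2) for spo2, fio2 in readings)


# Hypothetical readings in the postanaesthesia care unit.
pacu_readings = [(98, 0.40), (94, 0.30), (96, 0.21)]
lowest = minimal_sf_ratio(pacu_readings)
print(f"Minimal SpO2/FiO2 = {lowest:.0f}")
```

Note that the lowest ratio here comes from the reading with the highest SpO2, because that patient was receiving the most supplemental oxygen at the time.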

RESULTS A total of 5056 patients were included. Groups were well balanced on all demographic, baseline and procedural variables. Median time-weighted averages of intra-operative FiO2 in the 30 and 80% groups were 43% (IQR 38 to 54%, N=2486) and 81% (IQR 78 to 82%, N=2570), respectively. No difference was found in the lowest SpO2/FiO2 ratio (estimated median difference 0 [95% confidence interval: 0, 0], P = 0.91). The incidence of postoperative pulmonary complications was 16.3 and 17.6% in the 30 and 80% FiO2 groups, respectively (relative risk 1.07 [95% confidence interval: 0.95, 1.21], P = 0.25).

CONCLUSION Intra-operative hyperoxia did not change the postoperative SpO2/FiO2 ratio or the risk for pulmonary complications. Clinicians should not refrain from using hyperoxia for fear of provoking respiratory complications.

OFA (opioid-free anesthesia):

-

For:

-

Against:

Randomized controlled trial: local infiltration versus adductor canal block for total knee arthroplasty

BACKGROUND Local infiltration anaesthesia (LIA) was introduced as an innovative analgesic procedure for enhanced recovery after primary total knee arthroplasty (TKA). However, LIA has never been compared with analgesia based on an adductor canal catheter and a single-shot sciatic nerve block.

OBJECTIVE To evaluate two analgesic regimens for TKA comparing mobility, postoperative pain and patient satisfaction.

DESIGN Two-group randomised, controlled clinical trial.

SETTING Charité-Universitätsmedizin Berlin, Campus Charité Mitte, Germany between April and August 2017.

PATIENTS Adults undergoing primary TKA under general anaesthesia were eligible for study participation. Exclusion criteria were heart insufficiency (New York Heart Association class >2), liver insufficiency (Child Pugh Score >B), evidence of diabetic polyneuropathy, severe obesity (BMI > 40 kg m−2), chronic opioid therapy for more than 3 months before scheduled surgery and allergy to local anaesthetics.

INTERVENTIONS Nerve block patients group (n=20) underwent surgery with two ultrasound-guided regional anaesthesia blocks: a single-shot sciatic nerve block with 20 ml of ropivacaine 0.75% combined with an adductor canal block with a catheter placed for less than 4 days with an infusion of ropivacaine 0.2% at a rate of 6 ml h−1. LIA patients (LIA group, n=20) received LIA of the knee capsule at the end of surgery with 150 ml of ropivacaine 0.2%.

MAIN OUTCOME MEASURES The primary endpoint was postoperative time to patient mobilisation (ability to walk) on the ward.

RESULTS Baseline characteristics were similar in each study group. Patients in both groups were mobilised to walk after TKA in similar time frames (LIA 24.0 h versus nerve block 27.1 h, 95% CI of difference −9.6 to 3.3 h). Maximum postoperative pain scores on exertion were higher in LIA patients with a mean 1.3 of 10 numerical rating scale points (95% CI 0.3 to 2.3, P = 0.010) as were intra-operative opioid requirements (LIA median 107 [IQR 100 to 268] mg versus nerve block median 78 [60 to 98] mg, P < 0.001). Patient satisfaction, postoperative oral morphine-equivalents and resting pain levels were comparable between groups. Anaesthesia induction time was reduced in LIA patients (LIA 10 min versus nerve block 35 min, 95% CI of difference 13 to 38 min, P < 0.001).

CONCLUSION Both analgesic regimens allow early mobilisation after TKA with high patient satisfaction. LIA shortened peri-operative time. Further research is required to optimise especially pain control during the later postoperative period with LIA.

Regional anesthesia: intermittent bolus versus continuous infusion, no significant difference in thoracic surgery?

BACKGROUND The analgesic benefits of programmed intermittent bolus infusion for thoracic paravertebral block remain unknown.

OBJECTIVE The aim of this study was to compare the analgesia from intermittent bolus infusion with that of a continuous infusion after thoracic paravertebral block.

DESIGN A randomised controlled study.

SETTING A single centre between December 2016 and November 2017. Seventy patients scheduled for video-assisted thoracoscopic surgery were included in the study.

INTERVENTION(S) Patients were randomly assigned to receive 0.2% levobupivacaine via continuous infusion (5 ml h−1, continuous group) or programmed intermittent bolus infusion (15 ml every 3 h, bolus group) after an initial 15-ml bolus injection of 0.2% levobupivacaine.
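A quick calculation shows that the two regimens deliver the same hourly dose, so any difference between groups would reflect the mode of delivery rather than the amount of drug. The sketch below relies on the usual conversion of a 1% solution to 10 mg per ml. The function names are illustrative, and the initial 15-ml bolus, common to both groups, is left out.

```python
def mg_per_ml(concentration_percent: float) -> float:
    """Convert a percent (w/v) concentration to mg per ml: 1% equals 10 mg/ml."""
    return concentration_percent * 10


def continuous_mg_per_hour(rate_ml_per_h: float, concentration_percent: float) -> float:
    """Hourly dose delivered by a continuous infusion."""
    return rate_ml_per_h * mg_per_ml(concentration_percent)


def bolus_mg_per_hour(
    bolus_ml: float, interval_h: float, concentration_percent: float
) -> float:
    """Average hourly dose delivered by programmed intermittent boluses."""
    return bolus_ml * mg_per_ml(concentration_percent) / interval_h


# Regimens described in the abstract, both with 0.2% levobupivacaine.
continuous = continuous_mg_per_hour(5, 0.2)
intermittent = bolus_mg_per_hour(15, 3, 0.2)
print(f"Continuous: {continuous:.1f} mg/h, intermittent bolus: {intermittent:.1f} mg/h")
print(f"Over 24 h: {continuous * 24:.0f} mg versus {intermittent * 24:.0f} mg")
```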

MAIN OUTCOME MEASURES The main outcome was the amount of rescue fentanyl (per kg of body weight) consumed within 24 h after surgery. Secondary outcomes were postoperative pain scores, plasma levobupivacaine concentrations and the number of dermatomes anaesthetised.

RESULTS There was no significant difference between the continuous and bolus groups in the postoperative consumption of fentanyl (median [interquartile range] 5.5 [4 to 9.5] μg kg−1 versus 6 [3.5 to 9] μg kg−1 respectively, P = 0.45) and postoperative pain scores within 24 h. At 20 h after initiating the infusions, there was no statistically significant difference between the two groups in terms of the plasma levobupivacaine concentration. The number of dermatomes anaesthetised to pinprick and cold testing was significantly greater in the bolus group.

CONCLUSION Our findings suggest that postoperative pain and opioid usage are similar with either programmed intermittent bolus infusion or continuous infusion after thoracic paravertebral block. Programmed intermittent bolus infusion provides a wider sensory blockade and could benefit patients requiring a wider extent of anaesthesia.

April 29, 2019
